I am working on a vocabulary-building book for SAT and GRE students. Below is a picture of the provisional cover of the book:

To have a wide corpus of classical texts in which to find word usage examples, I downloaded a massive ebook collection from Project Gutenberg and merged all of the text files into one big file, 12.4 gigabytes in size. I then wrote a PHP script that used the grep utility to search through about 250 million lines of text for the word usages I needed.
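The post doesn't say exactly how the files were merged. Here is a minimal sketch of one way to do it, assuming the books are plain `.txt` files somewhere under a single directory. The function name and paths are hypothetical.

```shell
# Hypothetical helper: concatenate every .txt file under SRC_DIR into OUT.
# OUT should live outside SRC_DIR, or find could pick it up as an input.
merge_txt_files() {
  local src_dir=$1 out=$2
  # -print0 / sort -z / xargs -0 keep file names containing spaces intact;
  # sorting gives the same file order on every run.
  # -r skips running cat at all if no files are found.
  find "$src_dir" -type f -name '*.txt' -print0 \
    | sort -z \
    | xargs -0 -r cat -- > "$out"
}
```

Usage would look like `merge_txt_files gutenberg/ merged.txt`, where `gutenberg/` stands in for wherever the collection was unpacked.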

Here is an example of the results for the word “taciturn”:

In order to find interesting examples, I use the following regular expression:

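```
# -a: treat the file as text even if it contains binary bytes; -B/-A 20: 20 lines of context around each match
grep -E -a -B 20 -A 20 '\b(she|her|hers|his|he)\b.*taciturn' merged.txt
```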

This finds lines in which a pronoun such as “he” or “she” appears somewhere before the word. Restricting matches this way favors usages from novels over other kinds of books (the Gutenberg collection contains many non-novel files, such as encyclopedias and legal works).
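To see what the pattern keeps and drops, here is a small check on made-up sample lines. The sentences and the `pronoun_filter` name are illustrative only. Note that the match is case-sensitive, so a sentence-initial “She” or “He” only counts if a lowercase pronoun also appears before the word; adding `-i` would change that. `\b` (word boundary) is a GNU grep extension.

```shell
# Same pattern as above, reading from stdin; illustrative wrapper only.
pronoun_filter() {
  grep -E -a '\b(she|her|hers|his|he)\b.*taciturn'
}

# Made-up sample lines.
sample='and he remained taciturn all evening
her taciturn husband said nothing
taciturn (adj.): habitually silent
The taciturn clerk nodded.
She grew taciturn.'

printf '%s\n' "$sample" | pronoun_filter
# prints:
# and he remained taciturn all evening
# her taciturn husband said nothing
```

“The taciturn clerk” is rejected because the `he` inside “The” is not at a word boundary, and “She grew taciturn.” is rejected only because of the capital S.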

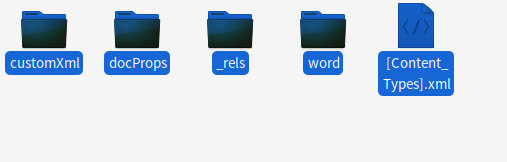

My first step toward speeding up the grep was to move the file to an old SSD attached to my desktop, which supports read speeds of up to 200 MB/s. That was still too slow, so I eventually moved the file to my main Samsung SSD, which reads at over 500 MB/s. Below is a screenshot of the iotop utility reporting a read speed of 447 MB/s while grep is running:

Another idea I had was to use GNU parallel or xargs to run several grep processes at once, so that the search could use multiple CPU cores. This was misguided, since the limiting factor in this grep was not CPU usage but disk throughput. With the SSD already maxed out, there is no point in adding more CPU cores to the task.
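One rough way to confirm that the disk rather than the CPU is the limit is to time a plain sequential read of the file and compare it with the grep time: if the two are close, grep is mostly waiting on I/O. A minimal sketch with dd follows; the function name is hypothetical. If the file is already in the page cache, the reported rate reflects RAM rather than the SSD; on Linux the cache can be dropped first with `sync; echo 3 | sudo tee /proc/sys/vm/drop_caches`.

```shell
# Hypothetical helper: read FILE sequentially and discard the data.
# dd does essentially no work on the bytes, so its rate approximates the
# fastest any single pass over the file (grep included) can go on that storage.
read_throughput() {
  # 1 MiB blocks; dd reports bytes copied, elapsed time and rate on stderr.
  dd if="$1" of=/dev/null bs=1048576
}
```

For example, `read_throughput merged.txt`, then compare the elapsed time it prints with the time grep takes on the same file.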

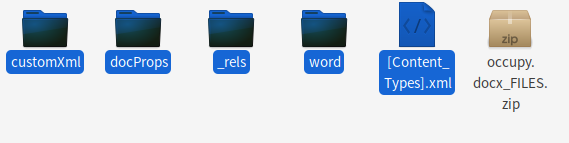
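For completeness, here is roughly what the xargs idea could look like. This is a sketch under stated assumptions, not something the post actually ran: GNU split cuts the file into line-aligned chunks and `xargs -P` runs one grep per chunk. It only pays off when grep is CPU-bound, and writing the chunks costs an extra full read and write of the file, which makes things worse on a disk-bound job like this one (GNU parallel's `--pipepart` option hands parts of a file to jobs without first writing chunk files). Context options such as `-B`/`-A` also cannot see across chunk boundaries. The function name is hypothetical.

```shell
# Hypothetical helper: grep FILE for PATTERN using JOBS concurrent processes.
# Requires GNU split (for -n l/N).
parallel_grep() {
  local pattern=$1 file=$2 jobs=${3:-4} dir
  dir=$(mktemp -d) || return 1
  # -n l/N writes N chunks (chunk.aa, chunk.ab, ...) without splitting lines.
  split -n "l/$jobs" -- "$file" "$dir/chunk."
  # One grep per chunk, up to $jobs at once. Each writes to its own .out file,
  # so output from concurrent greps cannot interleave.
  # The glob is expanded before any grep starts, so only chunk files are listed.
  printf '%s\0' "$dir"/chunk.* \
    | xargs -0 -n 1 -P "$jobs" sh -c 'grep -E -a -h -- "$0" "$1" > "$1.out"' "$pattern"
  # Suffixes sort alphabetically, so this restores the original line order.
  cat "$dir"/chunk.*.out
  rm -rf "$dir"
}
```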

With the following grep command, a single pass over the entire file took a little over 30 seconds:

Here is the output of the time command, which reports how long a command takes to run:

One of the first suggestions I found was to prefix the command with LC_ALL=C. This runs grep in the C locale, so it treats the input as single bytes instead of decoding multibyte (for example UTF-8) characters, which is cheaper.

That seemed to make the grep very slightly faster:

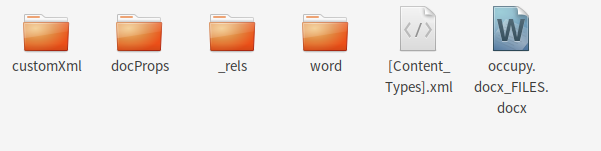
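A small sketch for repeating that comparison, assuming bash (for the `time` keyword); the function name is hypothetical. `grep -c` prints only the number of matching lines, so printing results doesn't skew the timing. In the C locale, `\b` and character classes work on bytes, so matches involving non-ASCII letters can differ slightly between the two runs.

```shell
# Hypothetical helper (bash): time one grep pass under the current locale and
# one under the C locale. -c prints only the count of matching lines.
# Run it twice: the first pass may be slower if the file isn't cached yet.
compare_locales() {
  local pattern=$1 file=$2
  echo "current locale:"
  time grep -E -a -c -- "$pattern" "$file"
  echo "C locale:"
  time LC_ALL=C grep -E -a -c -- "$pattern" "$file"
}
```

For example: `compare_locales '\b(she|her|hers|his|he)\b.*taciturn' merged.txt`.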

Just to see what would happen, I next used the fmt utility to reformat the file. The file was made up of short lines, each ending in a newline. With fmt, I rewrapped it into lines of up to 500 characters each. I expected this to make the grep slower, since with longer lines many more lines would match:

On the upside, I would get a lot more results. The fmt command reduced the number of lines from 246 million to only 37 million:

What actually happened on the next grep, though, was that the time dropped to only 23 seconds:

My guess is that grep simply has far fewer lines to go through.

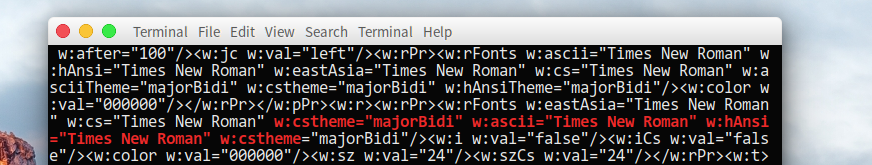

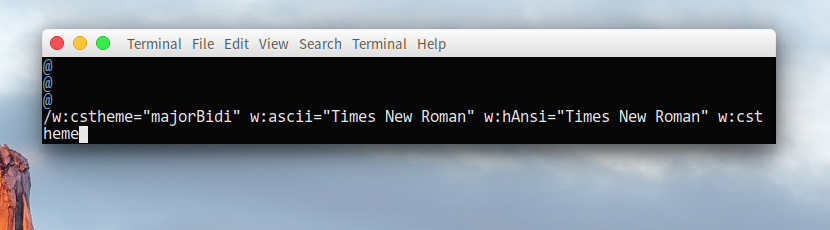

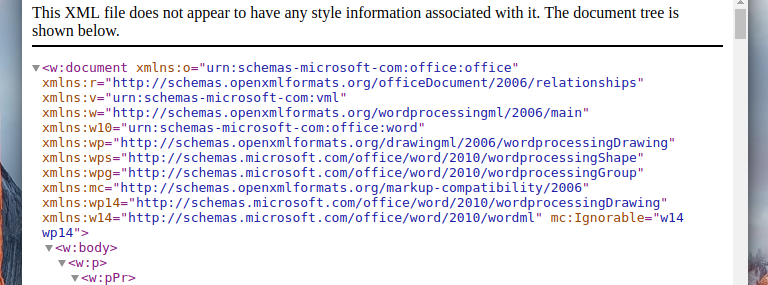
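The rewrapping step and the line-count check can be sketched like this. The exact fmt invocation from the post isn't shown, and the function name is hypothetical. fmt joins consecutive lines of a paragraph and keeps blank lines as paragraph breaks; `-w` sets the maximum line width, so lines come out at most 500 characters long rather than exactly 500.

```shell
# Hypothetical helper: rewrap IN into OUT with lines of at most WIDTH characters
# (default 500), then print the line counts before and after.
rewrap() {
  local in=$1 out=$2 width=${3:-500}
  # fmt joins lines within a paragraph; blank lines still separate paragraphs.
  fmt -w "$width" -- "$in" > "$out"
  echo "lines before: $(wc -l < "$in")"
  echo "lines after:  $(wc -l < "$out")"
}
```

For example, `rewrap merged.txt merged_500.txt 500`, where `merged_500.txt` is a hypothetical output name.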

Unfortunately, it looked like fmt had corrupted the text. Here is an example:

I think the reason was that some (or most, or all) of the text files used Windows-style line endings (CRLF) rather than Unix-style ones (LF), which was perhaps confusing fmt. So I used this command to convert all of the Windows-style line endings into spaces:

After that conversion, and after running fmt again on the result, the grep output no longer appears corrupted:

And:

I also searched for the previously corrupted passage to see how it looks now:

So it all seems fine now.
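The exact conversion command isn't shown above, so here is a minimal sketch of one way to do it, assuming the damage comes from carriage returns. With CRLF endings each line ends in a `\r`; once fmt joins lines, those `\r` characters land mid-line, where a terminal jumps back to column 0 and overprints the text. The helper names are hypothetical, and `count_cr_lines` relies on bash's `$'\r'` quoting.

```shell
# Hypothetical helper (bash): count lines that still contain a carriage return.
count_cr_lines() {
  grep -c -- $'\r' "$1"
}

# Hypothetical helper: turn every carriage return into a space, then rewrap.
# A space (rather than deleting the CR) keeps words apart if a stray CR ever
# sits between two words.
strip_cr_and_rewrap() {
  local in=$1 out=$2 width=${3:-500}
  tr '\r' ' ' < "$in" | fmt -w "$width" > "$out"
}
```

Running `count_cr_lines` on the merged file before and after the conversion is a quick way to check that no carriage returns are left.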

As far as I know, there is no way to speed up the grep significantly further unless I get a lot of RAM and run the grep on a ramdisk, or get a much faster SSD. Just out of curiosity, I tried changing the fmt command to make lines of 1500 characters each, to see how that affects the grep:

That didn’t actually do anything to speed up the grep further:
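A sketch for running the width experiment in one go, assuming bash and enough free space on the same disk for one rewrapped copy at a time. The function name is hypothetical. Each copy is written next to the input on purpose, since /tmp is RAM-backed on some systems and would make the timings incomparable. Matching-line counts aren't directly comparable across widths, because longer lines let the pronoun and the word fall on the same line more often.

```shell
# Hypothetical helper (bash): for each WIDTH, rewrap IN with fmt, report the
# line count, and time one grep pass over the rewrapped copy.
compare_widths() {
  local in=$1 pattern=$2 width tmp
  shift 2
  for width in "$@"; do
    # Keep the copy on the same disk as the input (/tmp may be RAM-backed).
    tmp=$(mktemp "$in.rewrap.XXXXXX") || return 1
    fmt -w "$width" -- "$in" > "$tmp"
    echo "width $width: $(wc -l < "$tmp") lines"
    # time reports on stderr; -c keeps grep's own output to a single number.
    time LC_ALL=C grep -E -a -c -- "$pattern" "$tmp"
    rm -f -- "$tmp"
  done
}
```

For example: `compare_widths merged_nocr.txt '\b(she|her|hers|his|he)\b.*taciturn' 500 1500`, where `merged_nocr.txt` is a hypothetical name for the cleaned file.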